When you work with Linux, a graphical interface is almost never the path of least resistance. Most of the time you’re SSH’d into a server with no GUI at all, staring at a wall of text, trying to find the one log line that matters. The commands in this post are the ones I reach for when that wall of text shows up.

I’ve used every command on this list this week. Not last year. This week. They’re the boring, foundational tools that, once they’re in your fingers, make the difference between debugging a production issue in three minutes and three hours.

If you haven’t read my top 10 Linux commands post, start there. This one assumes you know your way around ssh, cd, and man.

cat: read a file in one shot

cat stands for concatenate. It reads files sequentially and writes them to stdout, which is usually your terminal. The simplest, most-used command in the toolkit.

# Print a file

cat program.c

# Show line numbers

cat -n program.c

# Concatenate multiple files (this is what the name actually means)

cat program.c program.py

# Squeeze repeated blank lines into one

cat -s messy.txt

A common antipattern is “cat | grep something”, piping a single file’s contents into grep. You can just do grep something program.c directly. The cat-then-grep pattern is fine for piping multiple files together, but for a single file it’s an extra process for no reason. (grep users on Stack Overflow will gently correct you on this every time. They’ve earned the right.)
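To make the difference concrete, here is a small sketch using two throwaway files (the names and contents are made up for illustration). Both single-file forms produce the same match; the second just skips the extra process.

```shell
# Throwaway sample files; names and contents are made up for illustration.
workdir=$(mktemp -d)
printf 'int main(void) {\n  return 0;\n}\n' > "$workdir/program.c"
printf 'def main():\n    return 0\n' > "$workdir/program.py"

# Single file: the cat is redundant, grep can open the file itself.
via_cat=$(cat "$workdir/program.c" | grep 'return')
direct=$(grep 'return' "$workdir/program.c")

# Several files treated as one stream: here cat earns its place.
# -c counts matching lines across the combined input.
combined=$(cat "$workdir/program.c" "$workdir/program.py" | grep -c 'return')

echo "$direct"
echo "$combined"
```

Passing both files straight to grep also works, but grep then prefixes each match with its file name; add -h if you want the bare lines.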

There’s also a less-known cousin: tac (cat backwards), which prints a file with the last line first. I rarely need it, but the one time I did, it saved me from writing a small Python script to reverse a 2GB log file before tailing it.
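A quick sketch of what tac does, using a three-line sample file made up for illustration. tac ships with GNU coreutils, so this assumes a Linux box; it may not be present on BSD or macOS systems.

```shell
# Made-up sample file with three lines.
log=$(mktemp)
printf 'first\nsecond\nthird\n' > "$log"

# Print the file last line first.
reversed=$(tac "$log")
echo "$reversed"

# Newest entries first, then keep the top two: handy for append-only logs.
newest_two=$(tac "$log" | head -n 2)
echo "$newest_two"
rm -f "$log"
```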

less: read a file that won’t fit on the screen

cat works for small files. For a 4GB log file, cat will scroll past everything and leave you staring at the last screen with no way back. less is what you want instead.

# Open a file. Use arrow keys / Page Up / Page Down to scroll. q to quit.

less server.log

# Open the file at the END (great for tailing static logs)

less +G server.log

# Search forward for a regex inside less

# Press / then type the pattern, hit enter, then n for next match.

less keeps the file open and only reads the chunks you scroll into. That’s why it can handle log files that are bigger than your RAM. It’s also the pager that man, git log, and most other tools use behind the scenes; learn less once and you’ve effectively learned how to navigate inside half the CLI tools you use.

diff: see what changed between two files

diff compares two text files line by line and prints what’s different. The classic use case: you have two API responses, two config files, two snapshots, and you want to know what changed. The flags I actually use:

# Default: line-by-line diff

diff A.txt B.txt

# Side-by-side, two columns (much easier to scan)

diff -y A.txt B.txt

# Ignore case

diff -i A.txt B.txt

# Ignore whitespace differences

diff -w A.txt B.txt

# Combine: side-by-side, case-insensitive, whitespace-insensitive

diff -y -i -w response_a.json response_b.json

# Recursive diff between two directories

diff -r dir-a/ dir-b/

The pragmatic upgrade once you outgrow diff is git diff --no-index a.txt b.txt, which gives you colour and the same unified-diff format you already read in every commit and code review. But plain diff is on every Unix box ever made, and that universality is the point.
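If the appeal of git diff is mostly the familiar hunk format, plain diff can produce it too with -u (unified output). A minimal sketch with two made-up config files follows. The exit status is worth knowing as well: diff returns 0 when the files match and 1 when they differ, which makes it usable in scripts.

```shell
# Two made-up config files that differ in one line.
workdir=$(mktemp -d)
printf 'host=localhost\nport=8080\ndebug=true\n' > "$workdir/a.conf"
printf 'host=localhost\nport=9090\ndebug=true\n' > "$workdir/b.conf"

# Unified format: removed lines start with "-", added lines with "+".
# "|| status=$?" records the non-zero exit status instead of losing it.
status=0
patch=$(diff -u "$workdir/a.conf" "$workdir/b.conf") || status=$?
echo "$patch"
echo "exit status: $status"

# -q only reports whether the files differ, which is enough for a script.
if diff -q "$workdir/a.conf" "$workdir/b.conf" > /dev/null; then
  verdict="identical"
else
  verdict="different"
fi
echo "$verdict"
rm -rf "$workdir"
```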

sort, uniq, wc, head, and tail: shaping and sampling

sort reorders lines. uniq deduplicates adjacent identical lines. wc counts words, lines, and bytes. They were designed to compose, and once you internalise the chain sort | uniq -c | sort -rn, you’ll use it for the rest of your career.

# Sort alphabetically

sort employees.txt

# Numeric sort (otherwise "10" sorts before "2")

sort -n numbers.txt

# Reverse sort

sort -rn numbers.txt

# Sort and dedupe (sort -u is shorthand for sort | uniq)

sort -u employees.txt

# Count occurrences of each unique line, sort by frequency descending

# (the famous "top N" pattern in any log file)

sort access.log | uniq -c | sort -rn | head -10

# Count lines, words, bytes in a file

wc -l server.log # just lines

wc -w document.txt # just words

That last sort | uniq -c | sort -rn | head chain is the closest thing to a magic spell in Unix. It tells you the top N most common lines in any input. Want the top 10 IPs hitting your server? Extract the IP column from the access log and pipe it through the chain. Want the most-imported modules in a codebase? Pipe grep -rh '^import' through it. The pattern adapts to almost anything.
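Here is the chain run against a tiny, made-up access log, so the effect of the first sort is visible. The awk step that pulls out the first field is an assumption about the log layout (client IP in column one, as in the common nginx and Apache formats).

```shell
# Made-up log: six requests from three IPs, no two identical IPs adjacent.
log=$(mktemp)
printf '%s\n' \
  '10.0.0.1 GET /index.html' \
  '10.0.0.2 GET /about.html' \
  '10.0.0.1 GET /index.html' \
  '10.0.0.3 GET /index.html' \
  '10.0.0.1 GET /contact.html' \
  '10.0.0.2 GET /index.html' > "$log"

# Without sort, uniq only collapses adjacent duplicates, so nothing merges.
unsorted_groups=$(awk '{print $1}' "$log" | uniq -c | wc -l)

# With sort first, each IP ends up in exactly one group.
top=$(awk '{print $1}' "$log" | sort | uniq -c | sort -rn | head -n 2)
echo "$top"

# Just the busiest IP, without the count column.
busiest=$(echo "$top" | head -n 1 | awk '{print $2}')
echo "$busiest"
rm -f "$log"
```

Skipping the first sort is the most common way this chain goes wrong: the counts still look plausible, they are just split across several rows per value.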

head shows the first N lines of a file. tail shows the last N. tail -f (“follow”) keeps printing as new lines arrive, which is how you watch a log in real time.

# First 10 lines (default is 10)

head -n 10 server.log

# Last 10 lines

tail -n 10 server.log

# Lines 991-1000 (chain head and tail)

head -n 1000 server.log | tail -n 10

# Watch a log live as it's written

tail -f server.log

# Watch and filter for ERROR lines only

tail -f server.log | grep ERROR

# Survive log rotation (capital F instead of lowercase f)

tail -F /var/log/nginx/access.log

tail -F (capital F) keeps following the file by name, so it survives logrotate. Lowercase -f follows the open file descriptor: once logrotate renames the old file and creates a new one, -f stays attached to the renamed file and silently stops showing new lines. I learned this the slow way: I ran tail -f on a server overnight, came back the next morning, saw nothing new, and assumed nothing had broken. Plenty had broken. The log had rotated at midnight.
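For pulling a slice out of the middle of a file, the order of head and tail matters: head first trims everything after the slice, then tail keeps only the last lines of what remains. A small sketch using a generated 1,000-line file (the file and its contents are made up); sed -n with a line range is an equivalent that reads more directly.

```shell
# Made-up file: "line 1" through "line 1000".
log=$(mktemp)
seq 1 1000 | sed 's/^/line /' > "$log"

# Lines 991-1000: keep the first 1000 lines, then the last 10 of those.
slice=$(head -n 1000 "$log" | tail -n 10)

# Same slice by line number (-n suppresses default output, p prints the range).
slice_sed=$(sed -n '991,1000p' "$log")

# tail -n +N starts at line N instead of counting back from the end.
from_998=$(tail -n +998 "$log")

echo "$slice" | head -n 1
echo "$from_998"
rm -f "$log"
```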

grep: the command that paid for itself a thousand times

grep searches text for patterns. The name is a hangover from the ed editor command g/re/p (“globally search a regular expression and print”), but nobody cares about the etymology; we just care that it’s fast and ubiquitous. The full GNU grep manual is the canonical reference if you want every flag.

The flags I use most:

| Flag | What it does |
|---|---|
| -i | Case-insensitive |
| -r | Recurse into subdirectories |
| -n | Show line numbers |
| -v | Invert the match (lines NOT matching) |
| -c | Count matching lines |
| -A n | Print n lines after each match |
| -B n | Print n lines before each match |
| -C n | Print n lines around each match |
| -l | Print only the names of matching files |
| -E | Extended regex (egrep is the older, now-deprecated spelling) |

# Basic search

grep ERROR server.log

# Recursive, case-insensitive, with line numbers (the workhorse combo)

grep -rin 'todo' src/

# Show 5 lines of context around each match

grep -C 5 "Mr Robot" series.txt

# Count matching lines without printing them

grep -c "OutOfMemory" server.log

# Find which files contain a string (without printing the matches themselves)

grep -rl 'process.env.SECRET' .

# Filter another command's output: list nginx processes

ps -ef | grep -i nginx | grep -v grep

# Combine with tail to watch errors in real time

tail -f server.log | grep --line-buffered ERROR

The --line-buffered flag matters when piping through grep into another tool; without it, grep buffers output in chunks and your live view goes stuttery. If you find yourself fighting grep’s regex syntax, look at ripgrep (rg), which is faster, has better defaults, and respects .gitignore automatically. I still keep grep muscle memory because it’s installed everywhere; rg I install on every machine I use longer than a day.
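One subtlety worth a quick sketch: grep -c counts matching lines, not individual matches, so a line containing the pattern twice is counted once. Combining -o (print each match on its own line) with wc -l counts occurrences instead. The sample log below is made up.

```shell
# Made-up log; the second line mentions ERROR twice.
log=$(mktemp)
printf '%s\n' \
  'INFO  service started' \
  'ERROR db timeout, retry ERROR again' \
  'WARN  slow response' \
  'ERROR disk full' > "$log"

# Lines that contain ERROR at least once.
matching_lines=$(grep -c 'ERROR' "$log")

# Every occurrence of ERROR, one per output line, then counted.
occurrences=$(grep -o 'ERROR' "$log" | wc -l)

# Context: the matching line plus one line before it.
context=$(grep -B 1 'disk full' "$log")

echo "$matching_lines lines, $occurrences occurrences"
echo "$context"
rm -f "$log"
```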

sed: search and replace without opening the file

sed is a stream editor. It reads input, applies edits, writes output. Most days I use it for exactly one thing: find and replace.

# Replace first occurrence per line of 'abc' with 'xyz' (output to stdout)

sed 's/abc/xyz/' filename.txt

# Replace ALL occurrences per line (the g flag)

sed 's/abc/xyz/g' filename.txt

# Replace in place (edit the file directly). macOS needs '' after -i.

sed -i 's/abc/xyz/g' filename.txt # GNU sed (Linux)

sed -i '' 's/abc/xyz/g' filename.txt # BSD sed (macOS)

# Print only the lines that were changed

sed -n 's/abc/xyz/p' filename.txt

# Delete blank lines

sed '/^$/d' filename.txt

# Delete lines matching a pattern

sed '/DEBUG/d' server.log

The macOS-vs-Linux -i quirk has burned me more times than I care to admit. If you’re writing a script that has to run on both, use sed -i.bak (works on both, leaves a .bak file you can delete after). Or, honestly, switch to perl -i -pe, which is consistent across platforms and has saner regex syntax.
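A minimal sketch of the portable form, run against a throwaway file (name and contents made up). The suffix is attached directly to -i with no space, which is the spelling both GNU and BSD sed accept.

```shell
# Throwaway file with made-up contents.
workdir=$(mktemp -d)
printf 'abc one\nabc two abc\n' > "$workdir/filename.txt"

# Edit in place, keeping the original as filename.txt.bak.
sed -i.bak 's/abc/xyz/g' "$workdir/filename.txt"

edited=$(cat "$workdir/filename.txt")
backup=$(cat "$workdir/filename.txt.bak")
echo "$edited"

# Once the result looks right, drop the backup.
rm -f "$workdir/filename.txt.bak"
```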

For complex multi-step text transformations, sed starts to feel like trying to parse HTML with a chainsaw. That’s when you reach for awk, Python, or jq (for JSON) instead. Don’t force sed to do something it wasn’t made for.

A pattern I use weekly: bulk-rename a string across a whole codebase. grep -rl OLDNAME . lists matching files, and you pipe that list into sed with xargs:

grep -rl 'OldServiceName' src/ | xargs sed -i 's/OldServiceName/NewServiceName/g'

Two minutes of work, hundreds of edits. Always commit before running this so you can git diff and verify nothing exploded.
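A slightly more defensive sketch of the same rename, run in a scratch directory so it is safe to try. The file names are made up and one of them deliberately contains a space; --null and -0 are there so that such names survive the trip through xargs, and -i.bak is used so the command behaves the same on GNU and BSD sed.

```shell
# Scratch directory with made-up files; one name contains a space.
workdir=$(mktemp -d)
mkdir -p "$workdir/src"
printf 'call OldServiceName here\n' > "$workdir/src/main.txt"
printf 'OldServiceName again\n' > "$workdir/src/with space.txt"
printf 'nothing to rename\n' > "$workdir/src/untouched.txt"

# Dry run: see which lines would change before touching anything.
grep -rn 'OldServiceName' "$workdir/src"

# --null ends each file name with a NUL byte; xargs -0 splits on NUL,
# so "with space.txt" reaches sed as a single argument.
grep -rl --null 'OldServiceName' "$workdir/src" \
  | xargs -0 sed -i.bak 's/OldServiceName/NewServiceName/g'

# Count files that still mention the old name, ignoring the .bak copies.
remaining=$(grep -rl --exclude='*.bak' 'OldServiceName' "$workdir/src" | wc -l)
echo "files still using the old name: $remaining"
```

Only files that actually matched get a .bak copy, so cleaning up afterwards is a matter of deleting the *.bak files once the diff looks right.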

vim: the editor I keep meaning to leave

vim is the descendant of vi, which dates back to 1976 and has shipped on basically every Unix system since. The learning curve is famously steep, the joke is famously about not being able to exit, and the muscle memory once it sets in is famously hard to leave behind. I’ve tried Helix, I’ve tried Zed, I keep ending up back in vim.

# Open a file

vim path/to/file

# Inside vim:

# i enter insert mode (start typing to edit)

# Esc back to normal mode

# :w save

# :q quit

# :q! quit and discard changes

# :wq save and quit (also ZZ in normal mode)

# dd delete current line

# yy copy ("yank") current line

# p paste below current line

# u undo

# Ctrl-r redo

# /text search forward for "text"

# n / N next / previous match

# gg jump to top of file

# G jump to bottom of file

# :%s/foo/bar/g replace "foo" with "bar" everywhere

The exit-vim joke exists because if you’ve never seen a modal editor, every key produces unexpected results. The fix is Esc then :q! and pretend nothing happened. The first month is rough. The second month, you start to feel why people defend it so vigorously.

The single feature that finally made vim click for me was . (the dot command), which repeats your last edit. Combine it with motions like f" (find next double quote) and you can do “change inside quotes, then dot-repeat to do the same to the next three strings” without breaking flow. After that, every other editor felt like it was making me type too much.

If vim’s modal model isn’t for you, nano is the obvious alternative: WYSIWYG, no modes, all the keybinds shown at the bottom of the screen. I keep nano on every server I admin so that whoever takes over after me isn’t trapped.

Composing these tools with pipes

The point of this list isn’t memorising flags. It’s that each of these commands does one job, and they compose with pipes. Once you internalise that, the shell turns into a programmable text-processing pipeline.

A real-life example from yesterday. I needed to find the top 5 endpoints generating 500 errors in our nginx access log:

grep ' 500 ' /var/log/nginx/access.log \
  | awk '{print $7}' \
  | sort \
  | uniq -c \
  | sort -rn \
  | head -5

That’s grep filtering for HTTP 500 lines, awk extracting the URL field, sort | uniq -c counting occurrences, sort -rn ordering by count, head -5 taking the top 5. Six stages, one pipeline, three seconds to run. Try doing that in a GUI.
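One caveat with grep ' 500 ' is that it matches the string anywhere on the line, so a response whose body size happens to be 500 bytes is counted too. Below is a sketch of a stricter variant that tests the status field directly with awk. It assumes nginx’s default combined log format, where the request path is field 7 and the status code is field 9; the log lines are made up.

```shell
# Made-up log in combined format: three 500s, plus one 200 whose size is 500.
log=$(mktemp)
printf '%s\n' \
  '10.0.0.1 - - [10/Apr/2024:10:00:00 +0000] "GET /api/orders HTTP/1.1" 500 123 "-" "curl/8.0"' \
  '10.0.0.2 - - [10/Apr/2024:10:00:01 +0000] "GET /api/users HTTP/1.1" 200 500 "-" "curl/8.0"' \
  '10.0.0.3 - - [10/Apr/2024:10:00:02 +0000] "POST /api/orders HTTP/1.1" 500 87 "-" "curl/8.0"' \
  '10.0.0.4 - - [10/Apr/2024:10:00:03 +0000] "GET /api/cart HTTP/1.1" 500 45 "-" "curl/8.0"' > "$log"

# The text match also catches the 200 response whose size is 500 bytes.
loose=$(grep -c ' 500 ' "$log")

# Field match: only rows whose status column ($9, an assumption about
# the log format) is exactly 500.
strict=$(awk '$9 == 500' "$log" | wc -l)

# The same counting chain, driven by the field match.
top=$(awk '$9 == 500 {print $7}' "$log" | sort | uniq -c | sort -rn | head -n 5)
echo "$top"
worst=$(echo "$top" | head -n 1 | awk '{print $2}')
rm -f "$log"
```

If your log_format differs from the default, the field numbers will differ too, so check one line with awk '{print $7, $9}' before trusting the counts.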

A second example, this one for finding which of your committed files contain a TODO with someone’s name on it:

git ls-files | xargs grep -l 'TODO(hemant)' 2>/dev/null

git ls-files lists every tracked file, xargs feeds them as arguments to grep -l, which prints just the filenames where the string appears. The 2>/dev/null suppresses errors for paths grep can’t open, such as tracked files deleted from the working tree or submodule directories. Five seconds across a 50,000-file repo.

The third pipeline I use weekly: extracting the unique IPs that have hit my server in the last 1,000 access-log lines:

tail -n 1000 /var/log/nginx/access.log | awk '{print $1}' | sort -u

The pattern stays the same: filter, extract, sort, dedupe, count. If a task fits that mould, the shell is faster than writing code. If it doesn’t (multi-line records, JSON, anything stateful), reach for jq, Python, or a real language. Knowing when to stop using shell pipes is as important as knowing the pipes themselves.

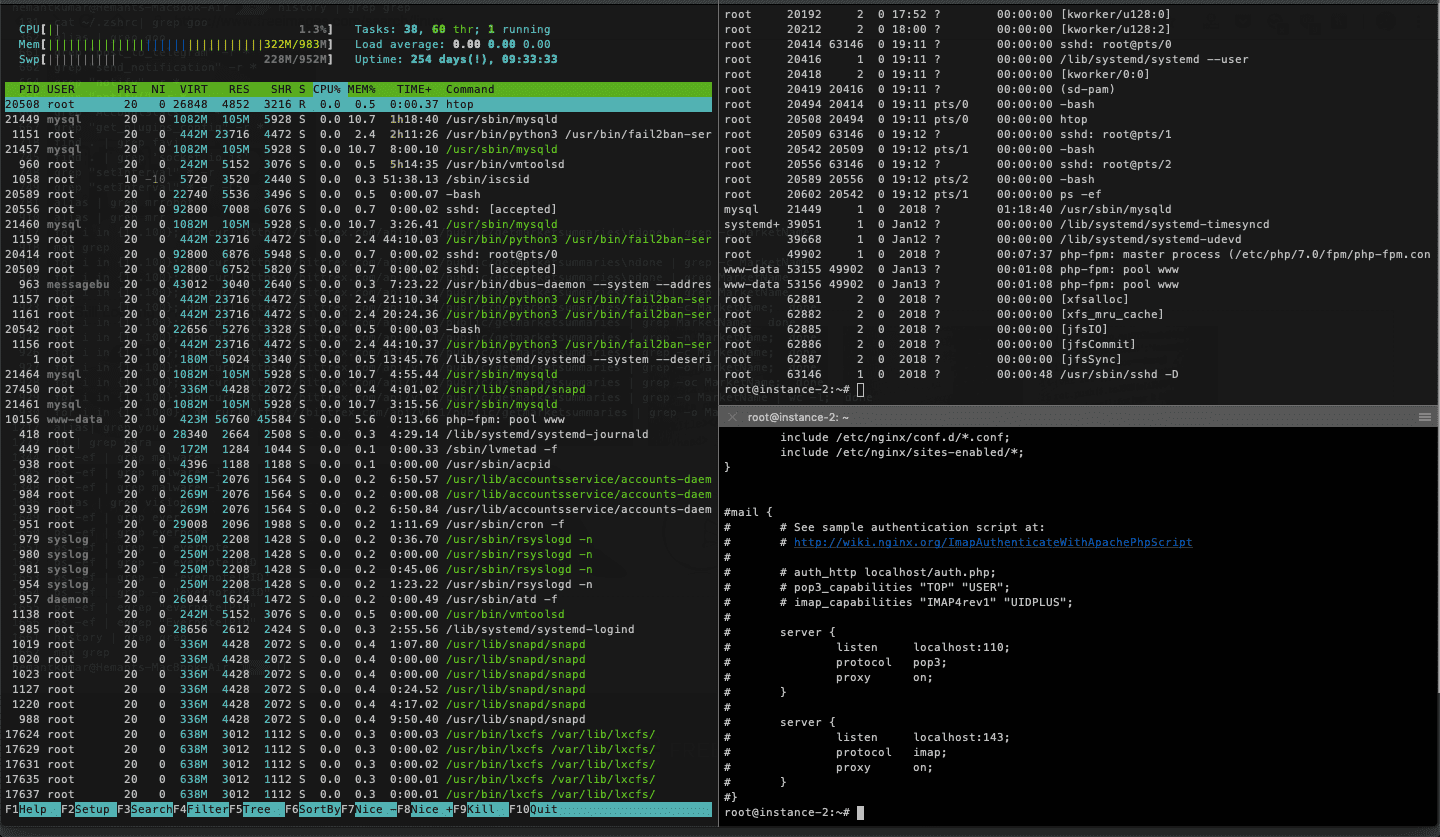

If this list felt useful, the file manipulation commands post covers the file-side equivalent (cp, mv, find, chmod), and the common system-monitoring commands cover top, free, df, and friends. The full GNU coreutils documentation is the canonical reference for cat, sort, head, tail, wc, and friends. Together with this one, that’s 90% of what I do at the terminal on any given day.

The shell is the most undersold productivity tool in software. A year of leaning into it pays back the rest of your career.

Last updated: April 2024